Building an AWS-Style Virtual Private Cloud (VPC) on Linux: A Research-Driven Implementation for Large Networks

Mechanical Engineer by qualification with a strong passion for technology and networking. CCIE Routing & Switching and Security (#22239, since 2008). Former Cisco TAC, HP, and Wipro. Currently focused on building free, impactful tools for India. Ongoing projects include Namohos.com, Anantaos.com, and Freefreecv.com.

Mini VPC using a Linux box

Abstract

First, let me admit my love for AWS and how much I admire it as a networking company. Yes, I only look for networking companies. Commercial cloud providers such as AWS deliver highly abstracted Virtual Private Cloud (VPC) environments that bundle routing, security, subnetting, NAT, firewalls, load balancing, and monitoring. This research project demonstrates how to replicate the core functionality of an AWS VPC using standard Linux components. The design emphasizes scalability, isolation, multi-subnet routing, and high availability for large networks.

1. Introduction

AWS VPCs offer a programmable, software-defined network where instances operate in logically isolated subnets. While proprietary, the underlying architecture can be reproduced using open-source Linux tools such as:

Linux network namespaces (compute environment isolation)

VRF (Virtual Routing and Forwarding)

iptables/nftables (security groups, NAT, routing policies)

dnsmasq/ISC DHCP (DHCP/DNS)

FRRouting (FRR) for scalable routing

Open vSwitch for SDN-style switching

WireGuard / OpenVPN for VPN-style connectivity

This project constructs a fully operational VPC with public, private, and isolated subnets, NAT gateway equivalents, and routing tables—mirroring AWS design principles.

2. VPC Architecture Overview

2.1 Core Components

| AWS Component | Linux Equivalent |
|---|---|
| VPC CIDR | Linux VRF + routing tables |
| Public Subnet | Namespace with Internet-routable NAT access |
| Private Subnet | Namespace routed to NAT gateway |
| Route Tables | ip route, VRFs, FRR |
| Internet Gateway | iptables MASQUERADE on main host |
| NAT Gateway | Separate namespace with iptables |
| Security Groups | nftables per-namespace rules |
| Network ACLs | VRF-level rules |
| DHCP | dnsmasq per subnet |
| VPC Peering | VRF-to-VRF routing via FRR |
| Site-to-Site VPN | WireGuard |

3. VPC Design for Large Networks

We use the following scalable CIDR plan:

VPC CIDR: 10.0.0.0/16

Public Subnet A: 10.0.1.0/24

Private Subnet A: 10.0.2.0/24

Private Subnet B: 10.0.3.0/24

DMZ Subnet: 10.0.10.0/24
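Before creating anything, it can help to confirm that every planned subnet actually falls inside the VPC CIDR. The following is a minimal sketch in portable shell; the function names ip_to_int and cidr_within are illustrative and not part of any standard tool.

```shell
# Convert a dotted-quad IPv4 address (e.g. 10.0.2.0) to a 32-bit integer.
ip_to_int() {
    _old_ifs=$IFS
    IFS=.
    set -- $1          # split the address on dots into $1..$4
    IFS=$_old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Return 0 if CIDR $1 (e.g. 10.0.2.0/24) lies entirely inside CIDR $2.
cidr_within() {
    sub_ip=${1%/*}; sub_len=${1#*/}
    vpc_ip=${2%/*}; vpc_len=${2#*/}
    # A subnet can only fit if its prefix is at least as long as the VPC's.
    [ "$sub_len" -ge "$vpc_len" ] || return 1
    mask=$(( (4294967295 << (32 - vpc_len)) & 4294967295 ))
    [ $(( $(ip_to_int "$sub_ip") & mask )) -eq $(( $(ip_to_int "$vpc_ip") & mask )) ]
}

# Check the plan from this section against the VPC CIDR.
for subnet in 10.0.1.0/24 10.0.2.0/24 10.0.3.0/24 10.0.10.0/24; do
    if cidr_within "$subnet" 10.0.0.0/16; then
        echo "$subnet: inside VPC"
    else
        echo "$subnet: OUTSIDE VPC"
    fi
done
```

The same check can be pointed at 10.0.255.0/24, the range used later for the NAT gateway, which also sits inside the /16.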

Scalability considerations:

Each subnet runs in an isolated namespace.

A dedicated NAT namespace serves private subnets.

VRF instances separate routing domains, allowing >100 subnets without table conflict.

FRR (BGP/OSPF) can be added for multi-node VPCs.

4. Implementation

4.1 Create the VRF Backbone

sudo ip link add vpc-vrf type vrf table 100

sudo ip link set vpc-vrf up

Interfaces are bound to the VRF later, in section 4.9.

4.2 Create Network Namespaces (Subnets)

sudo ip netns add pub-a

sudo ip netns add priv-a

sudo ip netns add priv-b

sudo ip netns add dmz

sudo ip netns add natgw

4.3 Create Virtual Switch (Open vSwitch recommended)

sudo ovs-vsctl add-br vpc-br0

Add veth pairs per subnet:

for ns in pub-a priv-a priv-b dmz natgw; do
  sudo ip link add "veth-$ns" type veth peer name "veth-$ns-br"
  sudo ip link set "veth-$ns" netns "$ns"
  sudo ovs-vsctl add-port vpc-br0 "veth-$ns-br"
  sudo ip netns exec "$ns" ip link set "veth-$ns" up
  sudo ip link set "veth-$ns-br" up
done
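One practical limit of the veth-NAME-br naming pattern is that Linux interface names can be at most 15 characters (IFNAMSIZ is 16 including the terminating NUL). The sketch below checks candidate names before any link is created; check_ifname is an illustrative helper, not an existing command.

```shell
# Succeed if $1 is short enough to be a Linux interface name (max 15 chars).
check_ifname() {
    if [ "${#1}" -le 15 ]; then
        echo "ok: $1 (${#1} chars)"
    else
        echo "too long: $1 (${#1} chars)" >&2
        return 1
    fi
}

# Validate the bridge-side names used by the loop above.
for ns in pub-a priv-a priv-b dmz natgw; do
    check_ifname "veth-$ns-br"
done
```

All five names from this article pass, but longer namespace names would not, so a check like this is worth running when subnets are generated automatically.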

4.4 Assign IPs to Subnets

Example public subnet:

sudo ip netns exec pub-a ip addr add 10.0.1.1/24 dev veth-pub-a

sudo ip netns exec pub-a ip link set lo up

Private subnets:

sudo ip netns exec priv-a ip addr add 10.0.2.1/24 dev veth-priv-a

sudo ip netns exec priv-a ip link set lo up

Repeat for priv-b (10.0.3.1/24) and dmz (10.0.10.1/24), following the CIDR plan in section 3.

4.5 Configure dnsmasq for DHCP

Example for private subnet A:

/etc/dnsmasq.d/priv-a.conf

interface=veth-priv-a

dhcp-range=10.0.2.50,10.0.2.200,12h

Enable:

sudo ip netns exec priv-a dnsmasq --conf-file=/etc/dnsmasq.d/priv-a.conf
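With one dnsmasq instance per subnet, writing each config by hand gets tedious. Here is a minimal sketch of a generator for the file shown above. The bind-interfaces, pid-file, and dhcp-leasefile lines are my additions, on the assumption that several instances on one host would otherwise share the default PID and lease files; the paths are illustrative.

```shell
# Print a dnsmasq config for one subnet namespace.
# $1 = namespace name (e.g. priv-a), $2 = first three octets (e.g. 10.0.2)
gen_dnsmasq_conf() {
    cat <<EOF
interface=veth-$1
bind-interfaces
dhcp-range=$2.50,$2.200,12h
pid-file=/run/dnsmasq-$1.pid
dhcp-leasefile=/var/lib/misc/dnsmasq-$1.leases
EOF
}

# Preview the config for private subnet A.
gen_dnsmasq_conf priv-a 10.0.2
```

To use it, redirect the output to /etc/dnsmasq.d/priv-a.conf (as root) and start dnsmasq inside the namespace as shown above.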

4.6 Implement the Internet Gateway

On host OS:

sudo sysctl -w net.ipv4.ip_forward=1

sudo iptables -t nat -A POSTROUTING -o eth0 -j MASQUERADE

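This plays roughly the role of the AWS Internet Gateway (IGW); eth0 here is the host's uplink interface and may be named differently on your system.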
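Running the MASQUERADE command twice adds the rule twice, and without a source match it translates all traffic leaving eth0. A minimal sketch of an idempotent, source-restricted variant follows. The function name ensure_masquerade and the IPT override variable are illustrative; the iptables -C (check) flag is a standard iptables option.

```shell
# IPT can be overridden (for example with a stub) when testing; defaults to iptables.
IPT=${IPT:-iptables}

# Add a source-restricted MASQUERADE rule only if it is not already present.
# $1 = source CIDR (the VPC range), $2 = uplink interface
ensure_masquerade() {
    if ! $IPT -t nat -C POSTROUTING -s "$1" -o "$2" -j MASQUERADE 2>/dev/null; then
        $IPT -t nat -A POSTROUTING -s "$1" -o "$2" -j MASQUERADE
    fi
}

# Usage (as root):
#   ensure_masquerade 10.0.0.0/16 eth0
```

Restricting the rule to 10.0.0.0/16 keeps the host's own traffic and any unrelated forwarding untouched.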

4.7 Create NAT Gateway Namespace

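sudo ip netns exec natgw ip addr add 10.0.255.1/24 dev veth-natgw

# each namespace has its own ip_forward setting, so enable it here as well
sudo ip netns exec natgw sysctl -w net.ipv4.ip_forward=1

sudo ip netns exec natgw iptables -t nat -A POSTROUTING -o veth-natgw -j MASQUERADE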

4.8 Route Private Subnets to NAT Gateway

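Inside each private subnet (10.0.255.1 lies outside the subnet's own /24, so the route needs the onlink flag; this works because every namespace shares the vpc-br0 segment):

sudo ip netns exec priv-a ip route add default via 10.0.255.1 dev veth-priv-a onlink

The NAT gateway also needs a return route to the VPC range:

sudo ip netns exec natgw ip route add 10.0.0.0/16 dev veth-natgw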

4.9 Add VRF Routing Policies

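Bind the bridge interface to the VRF and bring it up (routes cannot be added on a device that is down):

sudo ip link set vpc-br0 master vpc-vrf

sudo ip link set vpc-br0 up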

Add subnet routes:

sudo ip route add 10.0.1.0/24 dev vpc-br0 table 100

sudo ip route add 10.0.2.0/24 dev vpc-br0 table 100

sudo ip route add 10.0.3.0/24 dev vpc-br0 table 100

sudo ip route add 10.0.10.0/24 dev vpc-br0 table 100

Global rule:

sudo ip rule add iif vpc-vrf table 100

4.10 Security Groups via nftables

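Example allowing only inbound SSH. The commands run inside the namespace so that the rules do not land on the host, and return traffic for established connections is accepted because AWS security groups are stateful:

sudo ip netns exec priv-a nft add table inet sg-privA

sudo ip netns exec priv-a nft add chain inet sg-privA input '{ type filter hook input priority 0; }'

sudo ip netns exec priv-a nft add rule inet sg-privA input ct state established,related accept

sudo ip netns exec priv-a nft add rule inet sg-privA input tcp dport 22 accept

sudo ip netns exec priv-a nft add rule inet sg-privA input drop

Alternatively, load the same rules into the namespace from a saved ruleset file:

sudo ip netns exec priv-a nft -f sg-privA.nft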
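The sg-privA.nft file itself is not shown above. One way to produce it is to generate the ruleset from a list of allowed ports, much as a security group lists allowed inbound ports. This is a minimal sketch; gen_sg_ruleset is an illustrative helper, and the loopback and established-connection rules are my assumptions about what such a group should permit.

```shell
# Print an nftables ruleset that behaves like a simple security group.
# $1 = table name, remaining arguments = allowed inbound TCP ports
gen_sg_ruleset() {
    table=$1; shift
    ports=$(echo "$*" | sed 's/ /, /g')   # "22 443" -> "22, 443"
    cat <<EOF
table inet $table {
    chain input {
        type filter hook input priority 0; policy drop;
        iifname "lo" accept
        ct state established,related accept
        tcp dport { $ports } accept
    }
}
EOF
}

# Preview a group that allows SSH and HTTPS.
gen_sg_ruleset sg-privA 22 443
```

Redirecting the output to sg-privA.nft gives a file that the nft -f command above can load into the namespace. Using policy drop on the chain replaces the explicit final drop rule.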

5. Testing the VPC

5.1 Ping Between Subnets

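pub-a → priv-a (each namespace first needs a route to the other's subnet, for example "ip route add 10.0.2.0/24 dev veth-pub-a" inside pub-a and "ip route add 10.0.1.0/24 dev veth-priv-a" inside priv-a):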

sudo ip netns exec pub-a ping 10.0.2.1

5.2 Public Subnet Internet Test

sudo ip netns exec pub-a ping 8.8.8.8

5.3 Private Subnet Internet Test via NAT Gateway

sudo ip netns exec priv-a ping 8.8.8.8
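The three checks above can be wrapped in a small pass/fail harness so they can be rerun after every change. This is a sketch only; the check function and the counters are illustrative names, and the ping options in the commented usage (-c for count, -W for timeout) assume the common iputils ping.

```shell
pass=0; fail=0

# Run a command quietly and record whether it succeeded.
# $1 = description, remaining arguments = command to run
check() {
    desc=$1; shift
    if "$@" >/dev/null 2>&1; then
        pass=$((pass + 1)); echo "PASS: $desc"
    else
        fail=$((fail + 1)); echo "FAIL: $desc"
    fi
}

# Usage (as root), mirroring sections 5.1 to 5.3:
#   check "pub-a -> priv-a"    ip netns exec pub-a  ping -c 2 -W 2 10.0.2.1
#   check "pub-a -> internet"  ip netns exec pub-a  ping -c 2 -W 2 8.8.8.8
#   check "priv-a -> internet" ip netns exec priv-a ping -c 2 -W 2 8.8.8.8
#   echo "passed=$pass failed=$fail"
```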

6. Scaling for Large Network Environments

6.1 Add 50+ Subnets

Automate:

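for i in {11..60}; do
  ns="sub-$i"  # hypothetical naming scheme: subnet i uses 10.0.$i.0/24
  sudo ip netns add "$ns"
  # then repeat the veth (4.3), IP (4.4), and DHCP (4.5) steps for "$ns"
done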
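For a change this large, it may be safer to print the full command plan first and review it before anything touches the host. The sketch below does that. The sub-N namespace names and the mapping of subnet N to 10.0.N.0/24 are assumptions made for illustration, and gen_subnet_cmds and gen_plan are hypothetical helpers.

```shell
# Print (not run) the commands that would create subnet number $1.
gen_subnet_cmds() {
    ns="sub-$1"   # hypothetical naming scheme
    cat <<EOF
ip netns add $ns
ip link add veth-$ns type veth peer name veth-$ns-br
ip link set veth-$ns netns $ns
ovs-vsctl add-port vpc-br0 veth-$ns-br
ip link set veth-$ns-br up
ip netns exec $ns ip link set lo up
ip netns exec $ns ip link set veth-$ns up
ip netns exec $ns ip addr add 10.0.$1.1/24 dev veth-$ns
EOF
}

# Print the plan for subnets $1 through $2 inclusive.
gen_plan() {
    i=$1
    while [ "$i" -le "$2" ]; do
        gen_subnet_cmds "$i"
        i=$((i + 1))
    done
}

# Usage: review the plan, then apply it as root.
#   gen_plan 11 60 > plan.sh
#   sudo sh plan.sh
```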

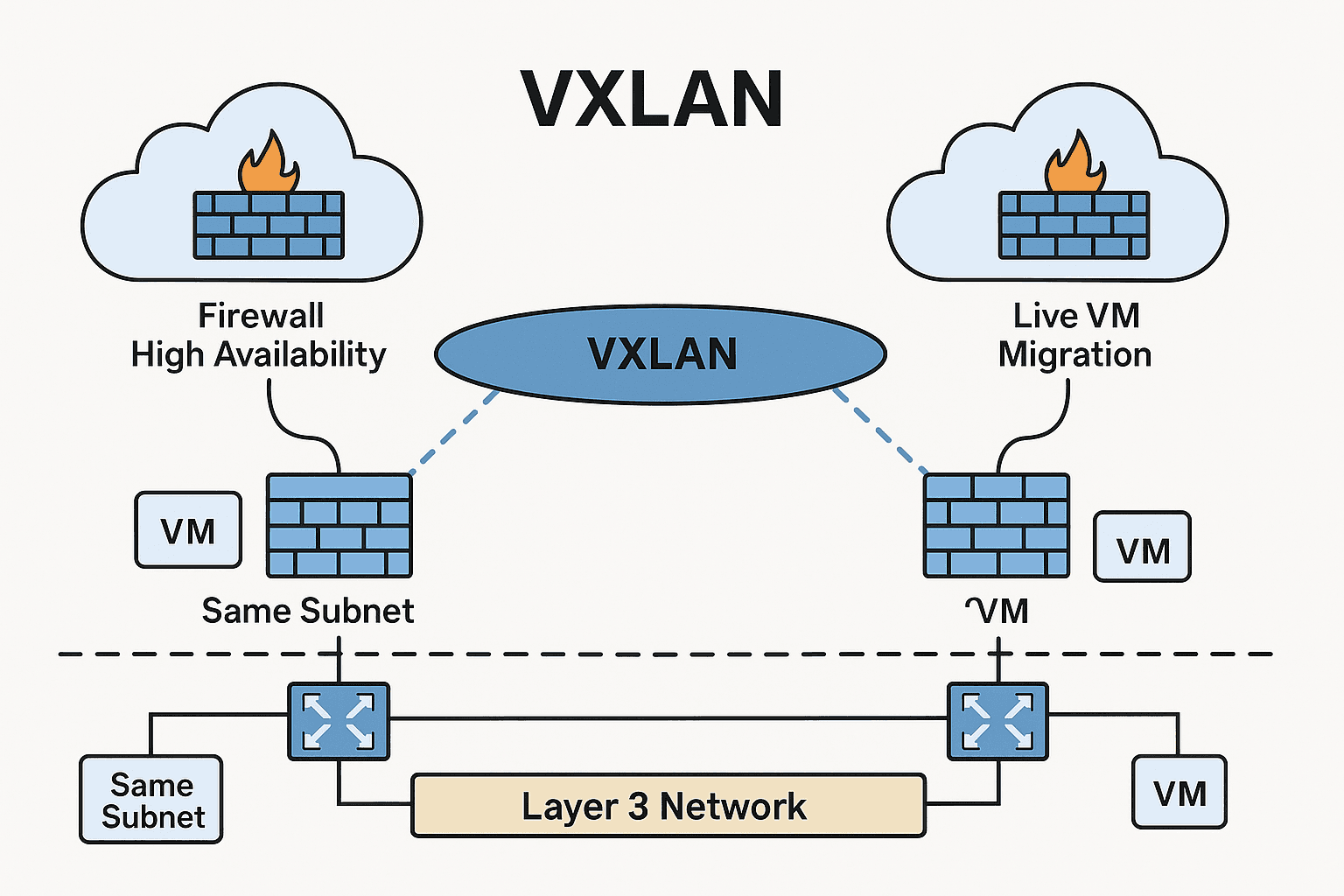

6.2 Distributed Multi-Node VPC

Use FRRouting (BGP) between nodes:

Node A advertises 10.0.0.0/16

Node B advertises 10.1.0.0/16

Dynamic routing = automatic VPC peering
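As a rough illustration of what each node's FRR configuration might contain, the sketch below prints a minimal BGP stanza. The AS numbers, router IDs, and peer addresses in the usage lines are placeholders I made up (private ASNs and documentation addresses). The "no bgp ebgp-requires-policy" line is a lab shortcut; a production setup would attach route-maps to the neighbor instead. Depending on FRR defaults, the advertised prefix may also need to exist in the node's routing table before it is announced.

```shell
# Print a minimal FRR BGP stanza for one VPC node.
# $1 = local ASN, $2 = router ID, $3 = peer IP, $4 = peer ASN, $5 = VPC CIDR to advertise
gen_frr_bgp() {
    cat <<EOF
router bgp $1
 bgp router-id $2
 no bgp ebgp-requires-policy
 neighbor $3 remote-as $4
 address-family ipv4 unicast
  network $5
 exit-address-family
EOF
}

# Usage (all values are placeholders):
#   Node A: gen_frr_bgp 65001 192.0.2.1 192.0.2.2 65002 10.0.0.0/16
#   Node B: gen_frr_bgp 65002 192.0.2.2 192.0.2.1 65001 10.1.0.0/16
```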

6.3 High-Availability NAT Gateway

Run two NAT gateways + keepalived for VIP failover.
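A sketch of what the keepalived side could look like: both gateways run a VRRP instance, and whichever holds the higher priority owns the virtual IP that private subnets use as their default gateway. The instance name NATGW_VIP, the router ID 51, the priorities, and the second namespace name natgw-b in the usage lines are all hypothetical. Each gateway would presumably also need its own address on the interface in addition to the shared VIP.

```shell
# Print a keepalived VRRP instance for one NAT gateway.
# $1 = MASTER or BACKUP, $2 = priority, $3 = interface, $4 = virtual IP with prefix
gen_keepalived_conf() {
    cat <<EOF
vrrp_instance NATGW_VIP {
    state $1
    interface $3
    virtual_router_id 51
    priority $2
    advert_int 1
    virtual_ipaddress {
        $4
    }
}
EOF
}

# Usage (natgw-b is a hypothetical second gateway namespace):
#   primary: gen_keepalived_conf MASTER 150 veth-natgw   10.0.255.1/24
#   standby: gen_keepalived_conf BACKUP 100 veth-natgw-b 10.0.255.1/24
```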

6.4 VPN Attachment

WireGuard tunnel to on-prem:

sudo ip netns exec dmz wg-quick up vpc-vpn
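The wg-quick command above expects a config named vpc-vpn.conf, normally under /etc/wireguard. A minimal sketch of generating one is shown below. The tunnel address, peer key, endpoint, and on-prem CIDR in the usage line are placeholders, and the private key must be filled in by hand (for example from wg genkey).

```shell
# Print a wg-quick config for the VPC side of the tunnel.
# $1 = local tunnel address, $2 = peer public key, $3 = peer endpoint, $4 = on-prem CIDR
gen_wg_conf() {
    cat <<EOF
[Interface]
Address = $1
PrivateKey = REPLACE_WITH_PRIVATE_KEY
ListenPort = 51820

[Peer]
PublicKey = $2
Endpoint = $3
AllowedIPs = $4
PersistentKeepalive = 25
EOF
}

# Usage (all values are placeholders):
#   gen_wg_conf 10.0.10.2/24 PEER_PUBLIC_KEY vpn.example.com:51820 192.168.0.0/16
```

AllowedIPs doubles as the routing table for the tunnel, so it should list only the on-prem ranges that the VPC is meant to reach.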

I know what you are thinking: can this handle a massive load of thousands of servers? To your surprise, the answer is yes, if it is built on my routing project, www.namahos.com. In practice, much of the performance of these solutions depends on the Linux version, kernel programming, fine-tuning, optimization, and the underlying hardware, which is a discussion for another day. Cheers.